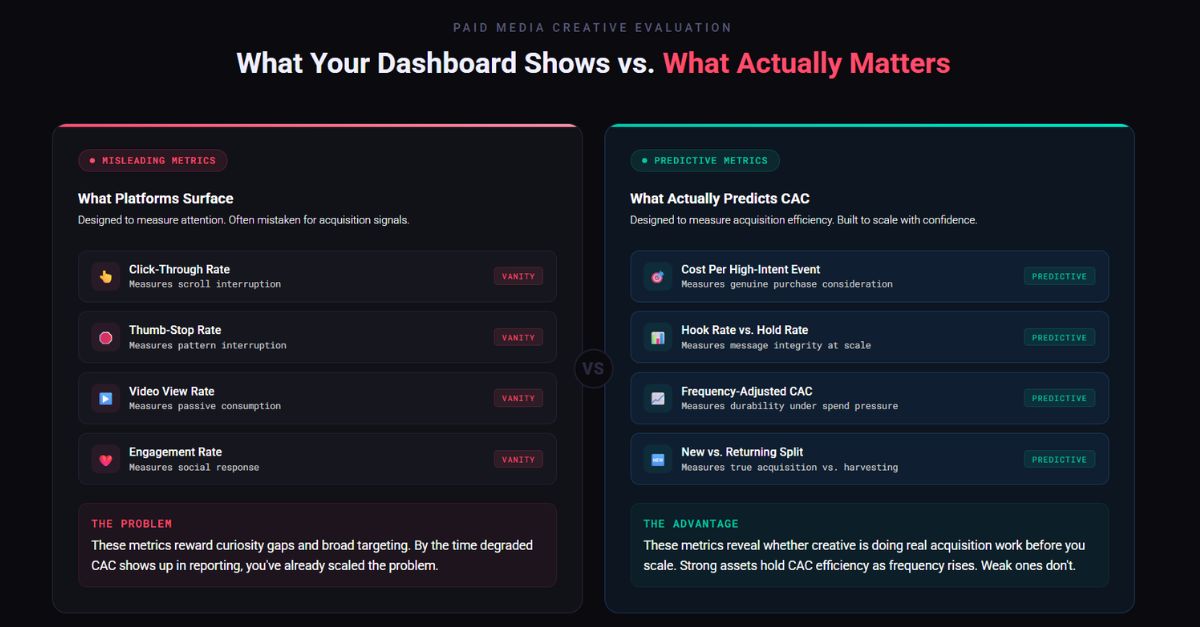

TL;DR: High CTR doesn’t equal efficient growth. The engagement metrics platforms spotlight are built to measure attention rather than acquisition efficiency, so scaling on them alone is how CAC quietly spikes. The brands that scale sustainably may well make better ads, but they also build smarter systems for measuring what actually drives profitable customer acquisition. To protect performance, evaluate creative on metrics tied to intent and revenue:

- Cost per high-intent event

- Frequency-adjusted CAC

- New vs. returning customer mix

- Landing page conversion rate by creative

Every performance marketer has been burned by this at least once.

A creative asset clears every benchmark you’ve set. Strong CTR. Healthy thumb-stop rate. The platform is flagging it as a top performer. You scale it up, and two weeks later, your CAC (customer acquisition cost) has crept up 30% and you’re scrambling to explain it to leadership.

The problem isn’t that you made a bad call. The problem is that the metrics most marketers use to evaluate creative were designed to measure engagement, not acquisition efficiency. And those are very different things.

After analyzing creative performance across hundreds of campaigns and tens of millions in direct-response spend, I’ve found that the metrics platforms surface most prominently are often the least predictive of a sustainable acquisition cost.

Here’s what I look at instead.

Why dashboard metrics mislead you

CTR, thumb-stop rate, and video view rates are attention metrics. They tell you whether your creative interrupted the scroll, not whether it attracted the right person or set accurate expectations for what comes after the click.

A creative that overpromises, uses extreme curiosity gaps, or targets broad audiences for cheap clicks can post spectacular engagement numbers while quietly destroying your conversion rate and downstream customer quality. By the time that shows up in your CAC, you’ve often already scaled it.

The gap between “this ad performed well” and “this ad acquired customers efficiently” is where most brands lose money on creative.

For a broader view on how attention metrics and AI impact search and discovery, check out 15 SEO Predictions for 2026.

The metrics I actually use

1. Cost per high-intent event

CTR tells you people clicked. A high-intent event tells you people believed what they saw. This could be:

- A form start

- A product page visit with meaningful dwell time

- A pricing page view

- Any action that signals genuine purchase consideration

When a creative drives strong clicks but weak mid-funnel progression, the ad is attracting curiosity, not intent.
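To make the comparison concrete, here is a minimal Python sketch of cost per high-intent event alongside the click-to-intent rate. The `CreativeStats` fields, the creative names, and all numbers are hypothetical; in practice they would come from whatever your platform and analytics exports provide.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CreativeStats:
    name: str
    spend: float             # total spend on this creative
    clicks: int              # link clicks reported by the platform
    high_intent_events: int  # e.g. form starts or pricing page views


def cost_per_high_intent_event(c: CreativeStats) -> Optional[float]:
    """Spend divided by high-intent events; None if there were no events."""
    if c.high_intent_events == 0:
        return None
    return c.spend / c.high_intent_events


def click_to_intent_rate(c: CreativeStats) -> Optional[float]:
    """Share of clicks that progressed to a high-intent event."""
    if c.clicks == 0:
        return None
    return c.high_intent_events / c.clicks


# Made-up numbers: same spend, very different click quality.
creatives = [
    CreativeStats("curiosity_hook", spend=1000.0, clicks=2000, high_intent_events=40),
    CreativeStats("product_demo", spend=1000.0, clicks=800, high_intent_events=80),
]

for c in creatives:
    print(c.name, cost_per_high_intent_event(c), click_to_intent_rate(c))
```

In this made-up example the curiosity-driven ad wins on clicks but pays twice as much for each high-intent event, which is the pattern described above.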


2. Hook rate vs. hold rate

Most marketers track one or the other. Hook rate (the percentage of people who watch past the first three seconds) tells you the opening landed. Hold rate (average watch time as a percentage of total video length) tells you whether the rest of the creative justified the attention you captured.

A high hook rate with a low hold rate is a red flag. It means your thumbnail or opening frame is doing the heavy lifting, and the actual message isn’t resonating. Creative like this tends to have a short performance shelf life; it spikes and drops fast.
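The two rates can be computed side by side with a short Python sketch. It assumes hook rate is measured against impressions and uses the hold rate definition given above; the function names and the `hook_floor` and `hold_floor` thresholds are illustrative placeholders, not benchmarks.

```python
def hook_rate(three_second_views: int, impressions: int) -> float:
    """Share of impressions that were watched past the first three seconds."""
    return three_second_views / impressions if impressions else 0.0


def hold_rate(avg_watch_seconds: float, video_length_seconds: float) -> float:
    """Average watch time as a fraction of total video length."""
    if not video_length_seconds:
        return 0.0
    return avg_watch_seconds / video_length_seconds


def hook_without_hold(hook: float, hold: float,
                      hook_floor: float = 0.30, hold_floor: float = 0.25) -> bool:
    """True when the opening lands but the rest of the video does not.

    The floors are hypothetical; set your own by format and objective.
    """
    return hook >= hook_floor and hold < hold_floor


# Made-up numbers for a 30-second video.
hook = hook_rate(three_second_views=4000, impressions=10000)
hold = hold_rate(avg_watch_seconds=4.5, video_length_seconds=30.0)
print(hook, hold, hook_without_hold(hook, hold))
```

Here a 40% hook rate paired with a 15% hold rate trips the flag, so the asset would be reviewed before any budget increase.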

3. Frequency-adjusted CAC

Raw CAC is a lagging indicator. Instead, track how CAC trends as frequency climbs on a specific creative:

- Assets with strong fundamentals hold efficiency as frequency rises

- Assets propped up by novelty fall apart quickly

- This is one of the most reliable signals for whether a creative will scale or stall
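One way to express this is a CAC curve per frequency bucket plus a single drift ratio. The Python sketch below assumes you can export spend and new customers grouped by average frequency for each creative; the bucket scheme, the function names, and the sample numbers are all hypothetical.

```python
from typing import Dict, Iterable, Optional, Tuple

Row = Tuple[int, float, int]  # (frequency_bucket, spend, new_customers)


def cac_by_frequency(rows: Iterable[Row]) -> Dict[int, Optional[float]]:
    """CAC for each frequency bucket; None where no customers were acquired."""
    curve: Dict[int, Optional[float]] = {}
    for bucket, spend, new_customers in rows:
        curve[bucket] = spend / new_customers if new_customers else None
    return curve


def cac_drift(curve: Dict[int, Optional[float]]) -> Optional[float]:
    """CAC in the highest usable bucket divided by CAC in the lowest.

    A value near 1.0 means efficiency is holding as frequency climbs.
    """
    buckets = sorted(b for b, cac in curve.items() if cac is not None)
    if len(buckets) < 2:
        return None
    return curve[buckets[-1]] / curve[buckets[0]]


# Made-up data for two creatives at frequency 1, 2, and 3.
durable = cac_by_frequency([(1, 500.0, 10), (2, 500.0, 10), (3, 500.0, 8)])
novelty = cac_by_frequency([(1, 500.0, 10), (2, 500.0, 5), (3, 500.0, 4)])

print(cac_drift(durable), cac_drift(novelty))
```

Both creatives start at the same CAC, but the second one costs 2.5 times as much per customer by the third bucket, which is the novelty pattern to watch for.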

4. New vs. returning customer split

This one is underused. Strong conversion volume means little if most of those conversions come from existing customers or recent site visitors. That’s not acquisition; it’s demand harvesting. Know the difference before you call something a winner.
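A quick Python sketch shows how much the split can change the picture. It assumes your order data can label each conversion as new or returning; the function names and figures are hypothetical.

```python
from typing import Optional


def new_customer_share(new_orders: int, returning_orders: int) -> float:
    """Fraction of attributed conversions that came from first-time customers."""
    total = new_orders + returning_orders
    return new_orders / total if total else 0.0


def new_customer_cac(spend: float, new_orders: int) -> Optional[float]:
    """Spend divided by new customers only; None if there were none."""
    return spend / new_orders if new_orders else None


# Made-up numbers: 100 attributed conversions on 2,000 in spend.
spend, new_orders, returning_orders = 2000.0, 30, 70

blended_cpa = spend / (new_orders + returning_orders)
print(blended_cpa)                                      # cost per conversion of any kind
print(new_customer_share(new_orders, returning_orders))
print(new_customer_cac(spend, new_orders))
```

With these inputs the blended cost per conversion is 20, yet only 30% of conversions are new customers, so the cost to acquire an actual new customer is more than three times higher.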

How to build a testing framework around these metrics

Knowing which metrics matter is only half the equation. Here’s how to evaluate them consistently:

- Set thresholds before you launch, not after. Deciding what “good” looks like mid-test introduces bias. Benchmark by format and objective in advance.

- Spend enough to be meaningful. Calling a winner on 200 clicks is one of the most common and costly mistakes in paid social. Tie minimum spend thresholds to your conversion volume, not arbitrary timelines.

- Isolate your variables. If you’re testing creative, hold targeting, placement, and landing page constant. Mixed variables make results unreadable.

- Track degradation, not just peak performance. A creative that holds for six weeks beats one that spikes and flatlines in ten days every time. Build degradation curves into your reporting so you can see fatigue coming.
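The first and last points above can be sketched in a few lines of Python: a threshold fixed before launch and a simple fatigue check on a weekly CAC series. The `min_conversions` and `tolerance` defaults and the sample series are hypothetical placeholders, not recommended values.

```python
from typing import Optional, Sequence


def has_enough_data(conversions: int, min_conversions: int = 50) -> bool:
    """Gate any winner call on a conversion minimum set before launch."""
    return conversions >= min_conversions


def weeks_until_fatigue(weekly_cac: Sequence[float],
                        tolerance: float = 1.25) -> Optional[int]:
    """First week (1-based) where CAC exceeds week-one CAC times tolerance.

    Returns None if the creative never crosses that line in the series.
    """
    if not weekly_cac:
        return None
    baseline = weekly_cac[0]
    for week, cac in enumerate(weekly_cac, start=1):
        if cac > baseline * tolerance:
            return week
    return None


# Made-up weekly CAC series for two creatives.
steady = [40.0, 41.0, 42.0, 44.0, 45.0, 47.0]
spiky = [30.0, 33.0, 45.0, 60.0]

print(weeks_until_fatigue(steady), weeks_until_fatigue(spiky))
print(has_enough_data(conversions=18))
```

The spiky creative starts cheaper but crosses the fatigue line in week three, while the steady one stays inside tolerance for all six weeks. Plotting the same series per creative gives the degradation curve described above.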

What this means for brands

The brands that consistently acquire customers at an efficient CAC don’t just have better creative; they have better systems for evaluating creative. They treat their creative pipeline as a performance asset, with the same rigor they’d apply to bidding strategy or audience segmentation.

That means giving creative teams data they can actually act on, not just engagement metrics that feel good. It means building feedback loops between what the platform reports and what actually shows up in your unit economics. And it means making creative decisions based on acquisition outcomes, not attention proxies.

The gap between a creative strategy that looks good in a dashboard and one that actually moves the business forward is measurable. You just have to know where to look.

This may seem like a lot to layer into your current workflow, but it’s exactly where our paid media team thrives. We help brands build creative evaluation systems that connect platform performance to real business outcomes.

Ready to stop guessing and start scaling with confidence? Let’s talk.